Map AI-Driven Attack Paths in AWS and Azure

Objective

This operational playbook outlines how to leverage the new AI-driven attack path mapping capabilities within Tenable Exposure Management to secure AWS and Azure environments.

As organizations integrate Generative AI and Machine Learning into cloud infrastructure, the attack surface expands to include model poisoning and data exfiltration. This playbook provides a structured approach to using Tenable to visualize and secure Amazon Bedrock, SageMaker, Azure Cognitive Services, and Azure Machine Learning.

Step 1: Asset Discovery

The first step is to identify all AI-related entities within your cloud footprint so that you understand the scope of your exposure. Use the Asset Query builder in the Attack Path section of Tenable Exposure Management to do the following:

- Identify AWS AI Components: Locate AwsBedrockAgent, AwsBedrockCustomModel, and AwsSageMakerNotebookInstance entities.

- Tag AI Workloads: Review AwsEc2Image (AMIs) and look for the is_ai flag to track specific AI-related workloads.

- Identify Azure AI Components: Locate the AzureCognitiveServicesAccount (including Azure OpenAI) and Azure Machine Learning Workspace entities.

- Map Relationships: Analyze how these entities connect to S3 buckets or Azure Storage accounts used for training input and model artifacts.
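If you export discovery results for offline review, the filtering described above can be sketched in a few lines of Python. This is an illustration only: the record layout (`type`, `name`, and `is_ai` keys) and the function name `group_ai_assets` are hypothetical and do not reflect a Tenable export schema, and the Azure Machine Learning workspace type is left out because its exact entity name is not given here.

```python
from collections import defaultdict

# Entity type names based on the list above; the record layout is an assumption.
AI_ENTITY_TYPES = {
    "AwsBedrockAgent",
    "AwsBedrockCustomModel",
    "AwsSageMakerNotebookInstance",
    "AzureCognitiveServicesAccount",
}


def group_ai_assets(records):
    """Group asset records that look AI-related by their entity type."""
    grouped = defaultdict(list)
    for record in records:
        entity_type = record.get("type", "")
        # AMIs count only when they explicitly carry the is_ai flag.
        flagged_image = entity_type == "AwsEc2Image" and record.get("is_ai") is True
        if entity_type in AI_ENTITY_TYPES or flagged_image:
            grouped[entity_type].append(record["name"])
    return dict(grouped)
```

Grouping by type makes it easier to compare the count of each AI component against what the owning teams expect to be deployed.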

Step 2: Execute and Analyze AI-Specific Attack Path Queries

Once assets are discovered, use Tenable’s built-in queries to identify high-risk Tactics, Techniques, and Procedures (TTPs).

Key Monitoring Areas

| Threat Category | AWS Focus | Azure Focus |

|---|---|---|

| Data Poisoning and Manipulation | Identities with s3:PutObject on training buckets | Identities with Storage Blob Data Contributor roles |

| Cloud Storage Exfiltration | Read access to Bedrock Custom Model output buckets | Storage Blob Data Reader access to inference results |

| Lateral Movement | Unauthorized bedrock:InvokeAgent calls | Unauthorized listkeys actions on Cognitive Services |

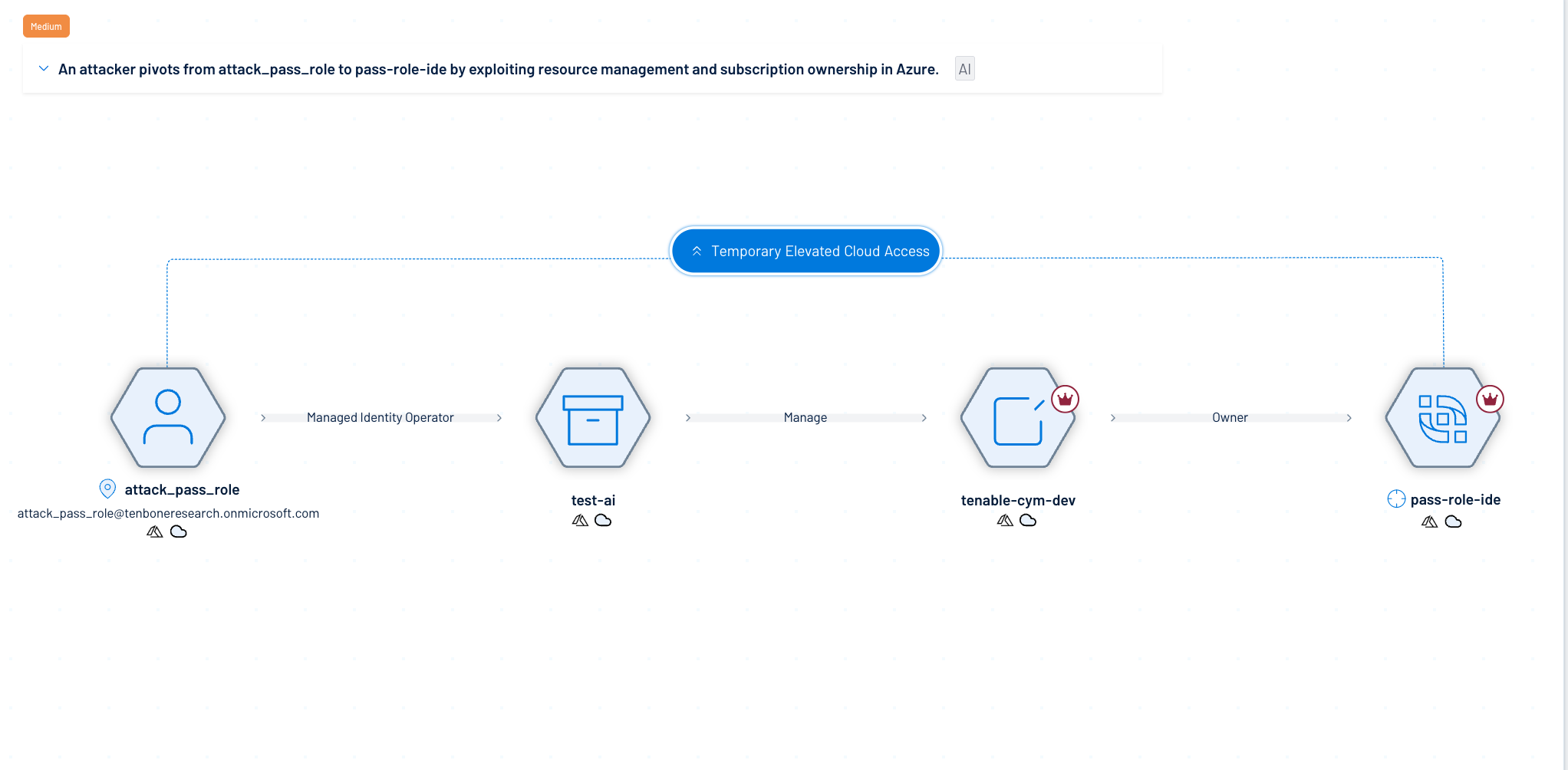

| Privilege Escalation and Role Assumption | IAM PassRole abuse via CreateStackMakerNotebook | Managed Identity abuse via compute instances |
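To make the data poisoning row concrete, the sketch below checks whether an IAM policy document grants `s3:PutObject` on objects in a given training bucket. It is a simplified approximation and not how Tenable evaluates access: it looks only at `Allow` statements and ignores `Condition`, `NotAction`, `NotResource`, explicit denies, and permission boundaries. The function names and the probe object key are made up for illustration.

```python
from fnmatch import fnmatchcase


def _as_list(value):
    """IAM accepts either a single string or a list for Action and Resource."""
    return [value] if isinstance(value, str) else list(value)


def allows_training_write(policy, bucket_name):
    """Return True if any Allow statement grants s3:PutObject on the bucket's objects."""
    # s3:PutObject is an object-level action, so test against an object ARN.
    probe_arn = f"arn:aws:s3:::{bucket_name}/probe-object"
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = _as_list(statement.get("Action", []))
        resources = _as_list(statement.get("Resource", []))
        # Action names are case-insensitive in IAM; resource ARNs are not.
        action_hit = any(fnmatchcase("s3:putobject", a.lower()) for a in actions)
        resource_hit = any(fnmatchcase(probe_arn, r) for r in resources)
        if action_hit and resource_hit:
            return True
    return False
```

Wildcards such as `s3:*` or `s3:Put*` are matched as well as the literal action name, since broad grants are a common source of unintended write access.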

The following built-in attack paths are available in the AI Infrastructure category. Generate these searches to achieve the following outcomes:

-

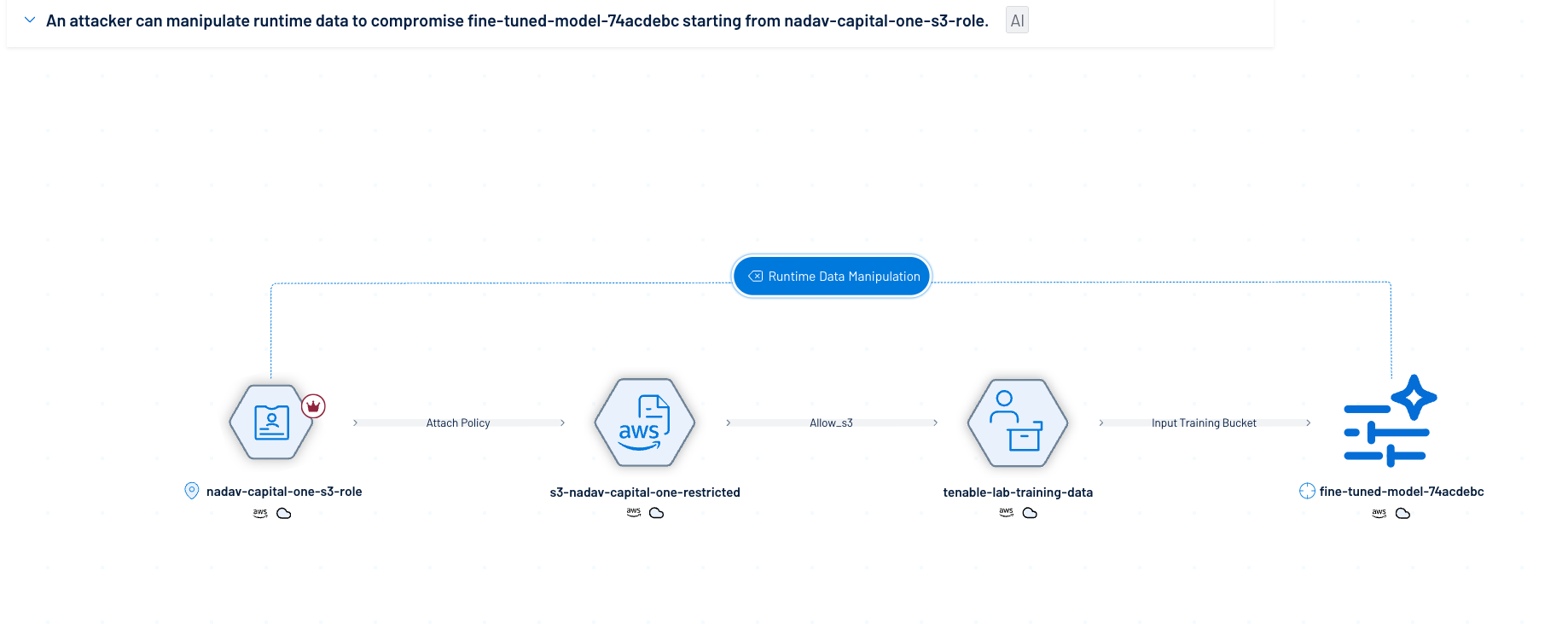

AI Training Data Poisoning: Identify IAM entities with write access to S3 buckets used as training input.

-

Internet to AI Services: Find paths leading from the Internet or External Assets directly to SageMaker, Bedrock, or Azure ML workspaces.

-

AI Service Role Abuse: Detect cloud resources with attached roles that can be abused via the Metadata API to access sensitive storage.

-

IAM Privilege Escalation: Spot identities that can escalate privileges by passing roles to AI service principals.
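Conceptually, an "Internet to AI Services" query is a reachability search over a graph of assets and relationships. The sketch below shows that idea with a plain breadth-first search. It is not Tenable's query engine: the edge list, the node type labels in `AI_SERVICE_TYPES`, and the `max_hops` limit are all hypothetical choices made for illustration.

```python
from collections import deque

# Hypothetical type labels for nodes that represent AI services.
AI_SERVICE_TYPES = {"SageMaker", "Bedrock", "AzureML"}


def find_paths_to_ai(edges, node_types, source="Internet", max_hops=6):
    """Return the shortest path from source to each reachable AI service node."""
    adjacency = {}
    for src, dst in edges:
        adjacency.setdefault(src, []).append(dst)

    paths = {}
    visited = {source}
    queue = deque([[source]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node_types.get(node) in AI_SERVICE_TYPES:
            paths[node] = path
        if len(path) - 1 >= max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in adjacency.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return paths
```

Because breadth-first search visits nodes in order of distance, the first path recorded for each AI service is also one of the shortest, which is usually the path worth remediating first.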


Step 3: Remediation and Hardening

Based on the attack paths discovered, implement the following security controls:

- Restrict Storage Access: Apply the principle of least privilege to S3 buckets and Azure Storage accounts linked to ML pipelines to prevent poisoning (T1565.003).

- Secure IMDS: Verify that AwsSageMaker and AwsBedrockAgent entities cannot unnecessarily access the metadata API to assume sensitive IAM roles.

- Monitor Managed Identities: In Azure, audit ML workspaces with system-assigned identities to ensure they cannot pivot to Key Vaults or databases.
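As one possible way to apply the storage restriction on the AWS side, the sketch below builds an S3 bucket policy that denies object writes and deletes to every principal except an allow-list of pipeline roles. It is a starting point under stated assumptions, not a recommended final policy: the function name, the `Sid`, and the example role ARN are invented, and a broad `Deny` like this should be tested in a non-production account first because it also blocks administrators who are not on the list.

```python
import json


def build_training_bucket_policy(bucket_name, allowed_role_arns):
    """Deny object writes and deletes to all principals except the listed role ARNs."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyWritesExceptPipelineRoles",
                "Effect": "Deny",
                "Principal": "*",
                "Action": ["s3:PutObject", "s3:DeleteObject"],
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
                # The deny applies only when the caller matches none of these ARNs.
                "Condition": {
                    "ArnNotEquals": {"aws:PrincipalArn": sorted(allowed_role_arns)}
                },
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder account ID and role name, for illustration only.
    example = build_training_bucket_policy(
        "training-data", ["arn:aws:iam::123456789012:role/ml-pipeline"]
    )
    print(json.dumps(example, indent=2))
```

An explicit deny is used here because it overrides any identity-based allow, so a stray wildcard grant elsewhere cannot reopen write access to the training data.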